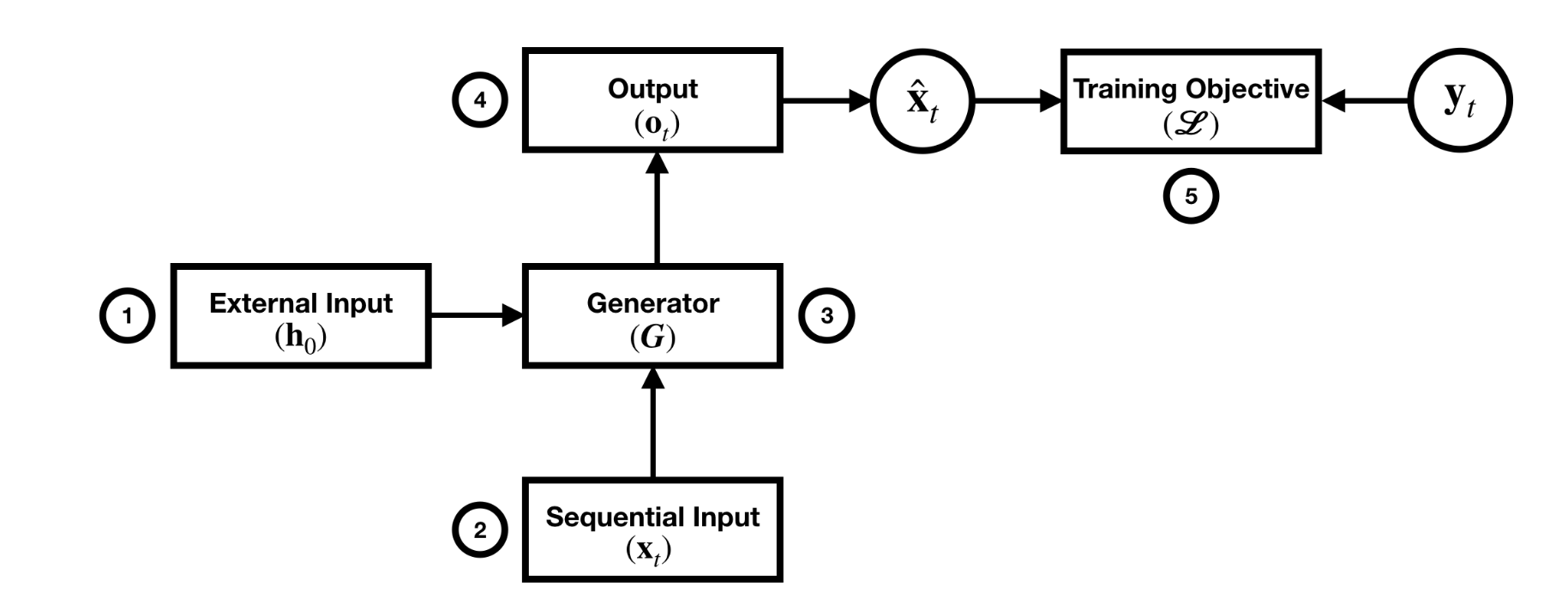

![[PDF] A Survey of Controllable Text Generation using Transformer-based Pre-trained Language Models | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/723fcade538f71df5fe5d1cde279686240f97b9f/4-Figure2-1.png)

[PDF] A Survey of Controllable Text Generation using Transformer-based Pre-trained Language Models | Semantic Scholar

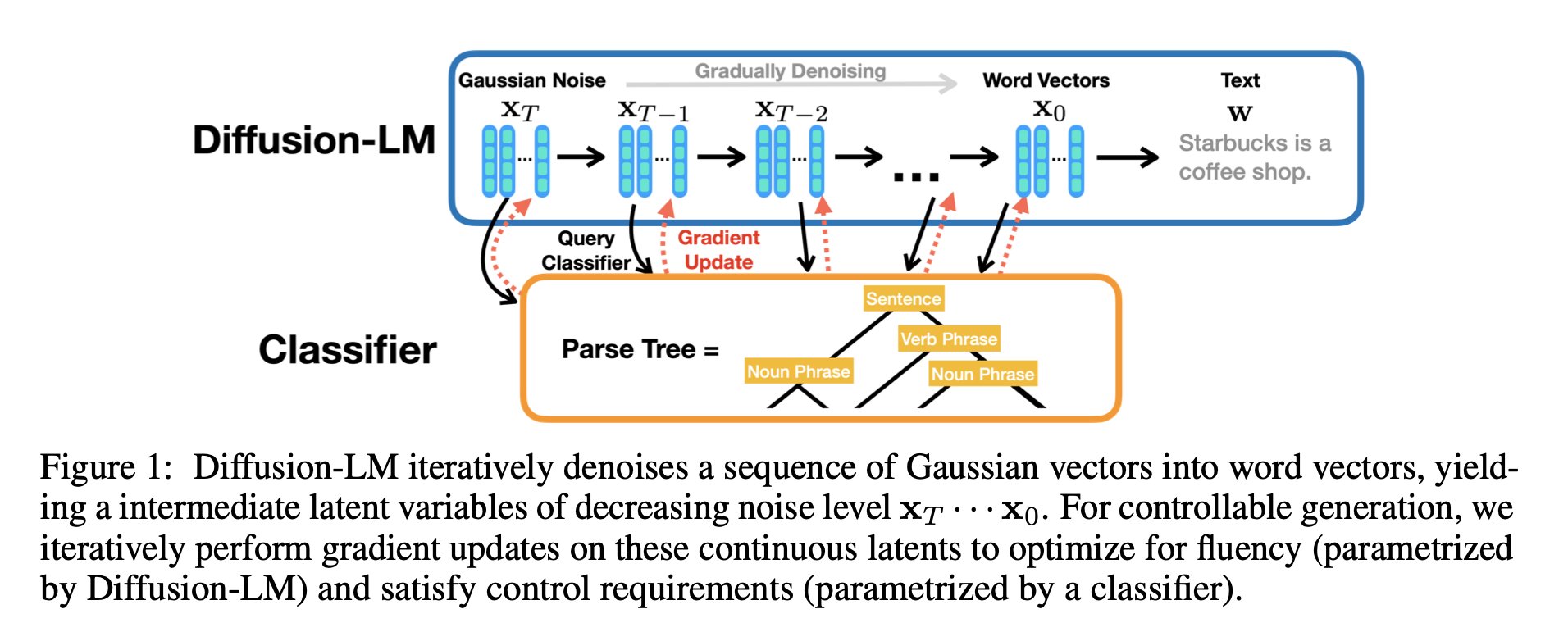

Xiang Lisa Li on Twitter: "https://t.co/sUXsgBxOAH We propose Diffusion-LM, a non-autoregressive language model based on continuous diffusions. It enables complex controllable generation. We can steer the LM to generate text with desired

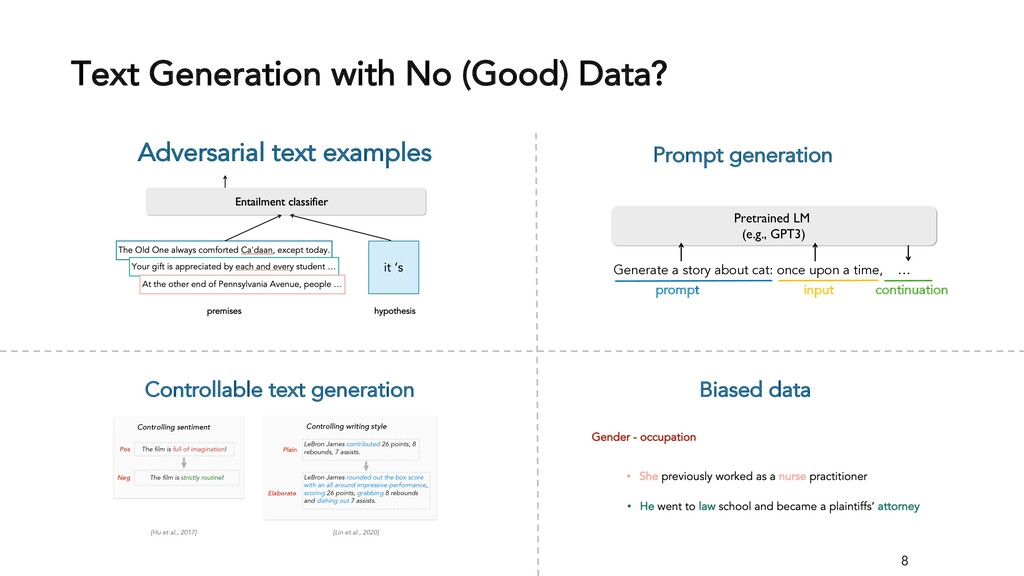

![[PDF] Exploring Controllable Text Generation Techniques | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/fcc2858d5013dfaf92cf478b6f3af4c63b92c6d9/2-Figure1-1.png)

![[PDF] A Causal Lens for Controllable Text Generation | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/21adf4285eb85f4a50106b84906f41c2bd68d510/2-Figure1-1.png)

![[PDF] Quark: Controllable Text Generation with Reinforced Unlearning | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/023edab4738690444e3924e224c2641017a0d794/2-Figure1-1.png)

![[PDF] Controllable text generation | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/5d13a82b82fd5f9f4ae30666dc568414907edc39/1-Figure2-1.png)

![[PDF] Controllable Text Generation | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/efbc200feab74e5087c4005d8759e5dadb3a3077/8-Table2-1.png)

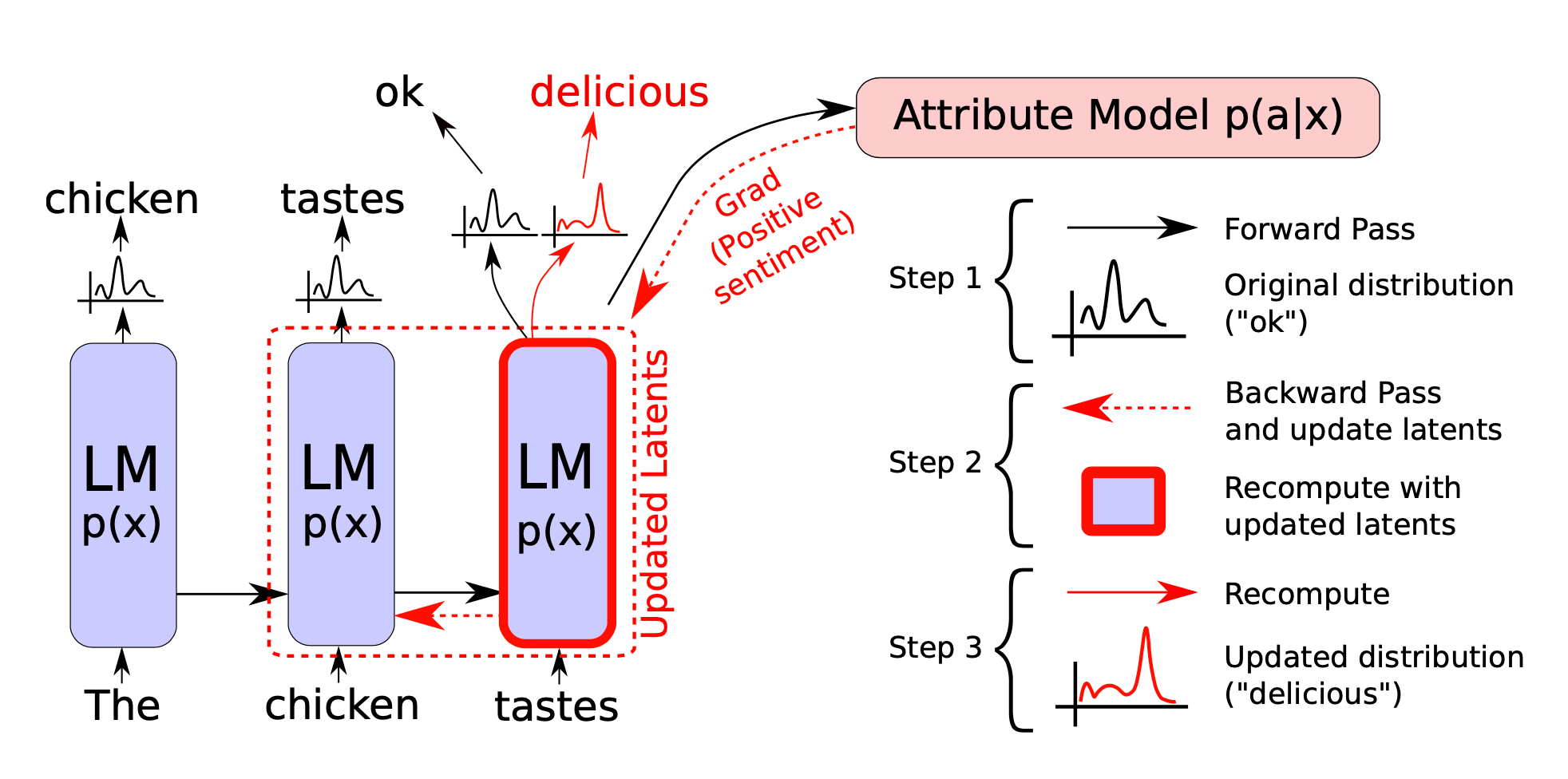

![[PDF] Controllable Text Generation | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/efbc200feab74e5087c4005d8759e5dadb3a3077/3-Figure1-1.png)